Key Takeaways

- AI is accelerating malware production, not fundamentally changing how malware behaves at runtime.

- Attackers still must operate within identity, privilege, and execution boundaries that can be observed and constrained.

- Reduced development cost is driving higher attack volume, faster variant churn, and shorter static indicator lifespans.

- Early experiments with LLM-as-C2 exist, but most AI use today happens before deployment, not during execution.

- Kernel-level runtime visibility remains the most durable defensive signal as static indicators degrade.

Introduction

The current conversation around AI and malware is dominated by noise. Security leaders are often told that the AI threat has fundamentally changed in the past year or two because large language models can now generate near-production-grade code, whether for development, remediation, or malware. That framing gives AI a level of importance that real-world incidents do not usually support.

The speed of malware production has clearly increased. That increase, however, does not change the conditions attackers must operate within once their code is deployed. AI affects how malware is created and, in a few early cases, how it is directed, but it does not remove the need to operate within identity systems, privilege models, and execution boundaries. Whether instructions were written by a human or assisted by a machine, they still have to execute in memory, open network connections, and interact with the filesystem in ways that can be constrained and observed.

The Industrialization of Malice

AI’s most immediate impact on malware is not increased sophistication, but reduced creation time, cost, and skill threshold. The effort required to produce functional malicious tooling has dropped significantly. Capabilities that once required experienced operators are now accessible to low-skill actors with minimal iteration.

This shift has pushed threat activity toward volume. Malware can be regenerated continuously with small structural changes, leading to rapid variant churn and short-lived file identities. Campaigns move faster, and static indicators expire too quickly to remain durable as a primary control.
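To make the point about short-lived file identities concrete, here is a minimal sketch of why a hash-based indicator stops matching after even a trivial change. The byte strings are harmless stand-ins and the `blocklist` set is a hypothetical indicator store, not any specific product's format.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of data as a hex string."""
    return hashlib.sha256(data).hexdigest()


# Harmless stand-in for a file whose hash has been published as an indicator.
original = b"#!/bin/sh\necho collecting host info\n"

# A regenerated "variant": identical behavior, one extra comment line.
variant = original + b"# build 2\n"

# Hypothetical static indicator store keyed by file hash.
blocklist = {sha256_hex(original)}


def is_known_bad(data: bytes) -> bool:
    """Static check: matches only byte-for-byte identical files."""
    return sha256_hex(data) in blocklist


print(is_known_bad(original))  # the published sample still matches
print(is_known_bad(variant))   # the trivially changed variant does not
```

The variant behaves the same at runtime, but the static check no longer recognizes it. When regeneration is cheap, this is the property that shortens indicator lifespans.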

The same cost reduction applies to discovery. AI accelerates vulnerability research, configuration analysis, and reverse engineering of binaries and proprietary components. Attackers only need one exploitable condition to move forward. Defenders, by contrast, must absorb the full cost of scanning code, monitoring dependencies, validating fixes, and deploying changes across production systems. As discovery accelerates, exploitation increasingly happens within the gap between identification and remediation. Another critical aspect of this industrialization is the shrinking gap between initial access and meaningful impact, as rapid environment analysis allows attackers to identify discrepancies and act on them quickly.

AI as the Producer, and Emerging Director, of Malware

AI is already influencing malware in two distinct ways, with very different operational implications:

AI-Written Malware

The first, and by far the more common, is AI-written malware. In this model, large language models are used during development to generate end-to-end malware, as well as phishing content, loaders, droppers, and supporting scripts.

The running malware itself contains no AI components and behaves like traditionally developed code, meaning the AI’s role ends when the malware is compiled or deployed. Underground tools such as WormGPT and KawaiiGPT are openly marketed for this purpose, but attackers do not require specialized models. Publicly available LLMs can currently also be prompted to produce functional malicious scaffolding by framing requests as diagnostics, red team exercises, or security research.

Regardless of the source, the result is static code; payloads do not adapt once deployed. They execute pre-generated logic, just produced faster and with far less effort than before.

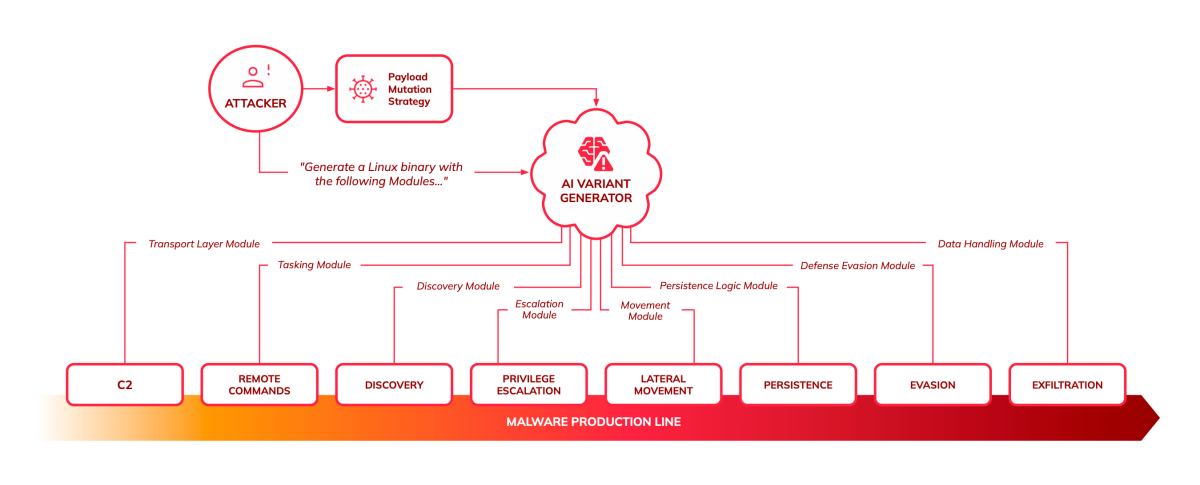

AI-Powered Malware

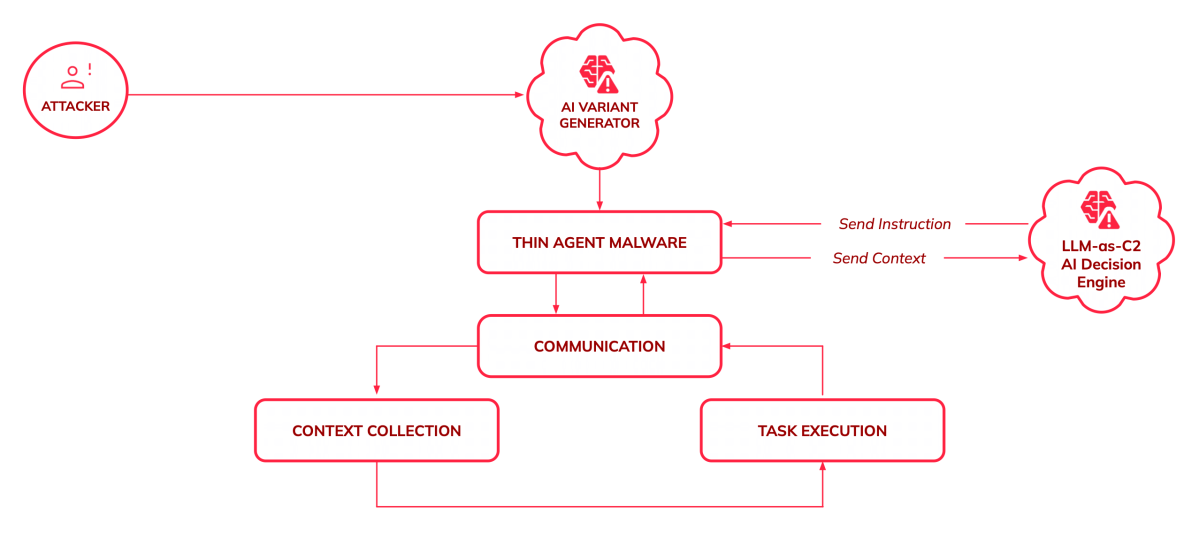

A second, less mature category is AI-powered malware, where a model is consulted not only at build time but also during execution. This pattern can also be described as LLM-as-C2, reflecting the use of a large language model as a command-and-control component rather than a traditional scripted backend. In this architecture, the local payload acts as a thin agent, collecting system context and relaying it to an external model, which returns tailored instructions or generated code.

This approach has been observed in a limited number of real-world cases and controlled investigations. Google’s Threat Intelligence Group has documented malware families that query large language models mid-attack to generate commands or assist decision-making dynamically. PromptSteal and PromptFlux show direct mid-execution LLM querying, while related families like QuietVault illustrate how AI tooling is being pulled into the execution phase in adjacent ways.

At a purely architectural level, modern AI assistants such as ClawdBot, MoltBot and others already resemble this model: a thin local component that continuously ships context to an external model and executes returned instructions. What distinguishes them from malware is intent and controls, not execution mechanics.

Operationally, thin-agent designs reduce local complexity and allow attackers to modify behavior without redeploying code. At the same time, they introduce new dependencies on network access, external services, and response latency. While LLM-as-C2 is not yet a dominant model, it represents a meaningful shift from AI being used purely to produce malware toward AI beginning to influence execution.
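The network dependency described above can be observed. The sketch below is a simplified illustration, not a production detection: it pairs each outbound connection with the workload that made it and flags model-API traffic from workloads that are not expected to generate any. The `Connection` record, the `EXPECTED_LLM_CLIENTS` allow list, and the workload names are all hypothetical, and the hostnames are only examples of public model API endpoints.

```python
from dataclasses import dataclass

# Example destinations only; a real deployment would maintain its own list.
LLM_API_HOSTS = {
    "api.openai.com",
    "api.anthropic.com",
    "generativelanguage.googleapis.com",
}

# Hypothetical allow list: workloads expected to call a model API.
EXPECTED_LLM_CLIENTS = {"support-chatbot"}


@dataclass(frozen=True)
class Connection:
    """Hypothetical runtime telemetry record for one outbound connection."""
    workload: str
    process: str
    dest_host: str


def unexpected_llm_clients(connections):
    """Return connections to model APIs from workloads not expected to make them."""
    return [
        c for c in connections
        if c.dest_host in LLM_API_HOSTS and c.workload not in EXPECTED_LLM_CLIENTS
    ]


observed = [
    Connection("support-chatbot", "python3", "api.openai.com"),
    Connection("billing-db", "bash", "api.openai.com"),
    Connection("billing-db", "postgres", "10.0.0.12"),
]

flagged = unexpected_llm_clients(observed)
for c in flagged:
    print(f"review: {c.workload}/{c.process} -> {c.dest_host}")
```

The destination alone is not suspicious, since legitimate applications use the same APIs. In this sketch the signal comes from combining the destination with the identity of the process and workload making the call.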

No Matter How It’s Written, It Still Runs

Whether a payload was written by hand, generated by an LLM, or guided by a model during execution, it still has to interact with a real system in order to function. In practice, those interactions tend to cluster around a small set of recurring runtime needs.

These are not rules, and not every attack will show all of them. They are simply common patterns observed across many real-world intrusions.

- Understanding the Environment

Malware often begins by reducing uncertainty about where it is running. In practice, this may look like inspecting the current user and process context, checking available privileges, or determining whether it is running inside a container, virtual machine, or cloud workload before proceeding.

- Staying Resident

Many attacks attempt to survive beyond a single execution. Depending on access and environment, this might involve registering a scheduled task, modifying a service definition, adding a startup hook, or abusing an application lifecycle mechanism to regain execution after restart.

- Coordinating Externally

Attacks that go beyond a single action usually require some form of external coordination. This can appear as outbound network communication used to retrieve instructions, exchange state, or signal progress. In emerging cases, this coordination can resemble LLM-as-C2 patterns, where a thin agent exchanges context with an external model to receive dynamically generated guidance.

- Expanding Access

After initial execution, attackers commonly look for ways to broaden their reach. At runtime, this may involve searching the filesystem for cloud credentials, reading environment variables for tokens, or interacting with metadata services to inherit additional identity or permissions.

- Touching and Moving Data

Whether the objective is theft, disruption, or leverage, malware must interact with sensitive resources and move information out of its original context. That interaction, followed by some form of exfiltration or misuse, produces observable runtime behavior even when the payload itself is unique.

These patterns are not new, and they are not specific to AI-driven threats. What has changed is the frequency with which they appear. As malware volume increases and static indicators lose reliability, these runtime interactions become the most consistent way to understand what an attack is actually doing.
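As a rough illustration of the five patterns above, the following sketch labels runtime events by what they do rather than by which file produced them. The event shape (`kind`, `detail`), the rule table, and every path and address in it are hypothetical simplifications. Real telemetry and detection logic would be far richer, and the catch-all outbound rule would be much too broad on its own.

```python
from collections import Counter

# Hypothetical rules: (category, event kind, substring expected in the detail).
# Order matters: specific rules come before the generic outbound rule.
RULES = [
    ("understanding_environment", "exec", "whoami"),
    ("understanding_environment", "file_read", "/proc/1/cgroup"),
    ("staying_resident", "file_write", "/etc/cron.d/"),
    ("staying_resident", "file_write", "/etc/systemd/system/"),
    ("expanding_access", "file_read", ".aws/credentials"),
    ("expanding_access", "net_connect", "169.254.169.254"),
    ("touching_and_moving_data", "file_read", "/var/lib/postgresql/"),
    ("coordinating_externally", "net_connect", ""),  # any other outbound connection
]


def classify(event):
    """Return the first matching category for an event, or None."""
    for category, kind, needle in RULES:
        if event["kind"] == kind and needle in event["detail"]:
            return category
    return None


def summarize(events):
    """Count how many events fall into each category, ignoring unmatched ones."""
    return Counter(c for c in map(classify, events) if c is not None)


events = [
    {"kind": "exec", "detail": "whoami"},
    {"kind": "file_read", "detail": "/home/app/.aws/credentials"},
    {"kind": "net_connect", "detail": "169.254.169.254:80"},
    {"kind": "file_write", "detail": "/etc/cron.d/update"},
    {"kind": "net_connect", "detail": "203.0.113.7:443"},
    {"kind": "file_read", "detail": "/tmp/scratch.txt"},
]

print(summarize(events))
```

None of the rules refers to a file hash or signature, so the same labels apply whether the process behind the events was written by hand or regenerated by a model an hour earlier.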

Looking Ahead to 2026

Based on the dynamics discussed throughout this article, the following predictions reflect how these trends are expected to develop through 2026.

- Malware volume will continue to grow.

AI keeps lowering the cost of generating new variants, favoring spray-and-pray campaigns and rapid iteration over carefully engineered payloads.

- AI-written malware will remain the dominant pattern.

Most real-world use of AI will stay focused on producing end-to-end malware and constant variations faster, not on autonomous decision-making at runtime.

- AI-powered execution will remain experimental, but persistent.

LLM-as-C2 and thin-agent designs will continue to surface in limited cases as attackers explore ways to externalize logic and reduce redeployment.

- The gap between discovery and exploitation will keep shrinking.

AI-assisted vulnerability research and reverse engineering will continue to compress timelines faster than organizations can reliably patch.

- Kernel-level visibility will age better than static controls.

As file-based indicators lose durability, observing behavior at runtime and at the kernel level will remain the most stable source of defensive signal.

How Orca Can Help

The Orca Cloud Security Platform addresses AI-assisted threats by focusing on runtime behavior rather than static indicators. Using eBPF-based telemetry, Orca observes execution patterns at the kernel level, such as process behavior, network connections, file access, and privilege escalation. This provides visibility into what malware is actually doing, regardless of how it was written or whether it operates as a thin agent consulting an external model.

As malware volume increases and variants proliferate faster than signatures can keep pace, Orca’s approach remains effective by anchoring detection in execution mechanics rather than file-based identifiers. By correlating runtime telemetry with asset context, including exposure, criticality, and attack path reachability, Orca enables teams to investigate and respond based on actual threat activity in real time, helping organizations detect suspicious behavior before attackers can expand access or exfiltrate data.

FAQs

AI is already being used to accelerate malware development, including the creation of complete, end-to-end malware. In practice, large language models are often used to generate phishing content, loaders, droppers, and supporting components that are combined into a working payload. The main shift is not new attack techniques, but the ability to produce and iterate on malware faster and with less specialized skill. The result is higher volume and quicker evolution, rather than fundamentally different behavior at runtime.

So far, AI is used during malware execution only in limited cases. Most AI use still happens before deployment. That said, there are early examples of malware consulting large language models during execution to generate commands or assist decisions. These cases are uncommon today, but they show how AI may begin to influence runtime behavior rather than just code creation.

LLM-as-C2 describes using a large language model as part of the command-and-control process instead of a fully scripted backend. In this model, malware sends execution context to an external AI service and receives instructions or generated logic in return. This allows behavior to change without modifying or redeploying the local payload.

A thin agent is a payload that contains minimal embedded logic. The local code often looks generic and benign, such as a simple Bash or Python script or a small utility-style binary. It does not encode explicit attack logic and may not resemble known malware patterns. Its role is to execute instructions, collect context, and communicate externally, while decision-making lives outside the payload.

Thin agents and LLM-as-C2 are hard to detect because neither the code nor the communication necessarily looks malicious in isolation. Thin-agent code may not be flagged by static analysis or signatures because it lacks hard-coded malicious behavior. When paired with LLM-as-C2, the agent may communicate through standard APIs that are widely used by legitimate applications, so static or reputation-based network controls may not flag the traffic either. As a result, detection shifts away from inspecting code content or destinations and toward observing runtime behavior: how the process uses identity, accesses resources, communicates externally, and acts within the system.