AI Security

Protect AI at the pace of business. Empower your teams to innovate safely. Eliminate blind spots and govern your cloud AI services with a platform built to see the risks traditional tools miss.

280+ reviews from

The Challenge

The AI Attack Surface is Sprawling at an Exponential Pace

Every AI model, coding copilot, MCP server, and cloud AI service your organization adopts expands the attack surface faster than security teams can track. Most weren’t built with security in mind, and traditional tools weren’t built to see them.

Security teams can’t see where shadow AI services and agents get deployed.

Security risks for AI go beyond the prompt level and impact every part of the application lifecycle.

The ownership gap for AI risk is unresolved, and attackers don’t wait for org charts.

Our Approach

End-to-End Visibility and Protection: Code, Posture, and Runtime

One platform for cloud and AI security. Orca expands core AppSec, SideScanning™, and Sensor capabilities to deliver the same visibility, risk insight, and deep data for AI that it does for other cloud resources.

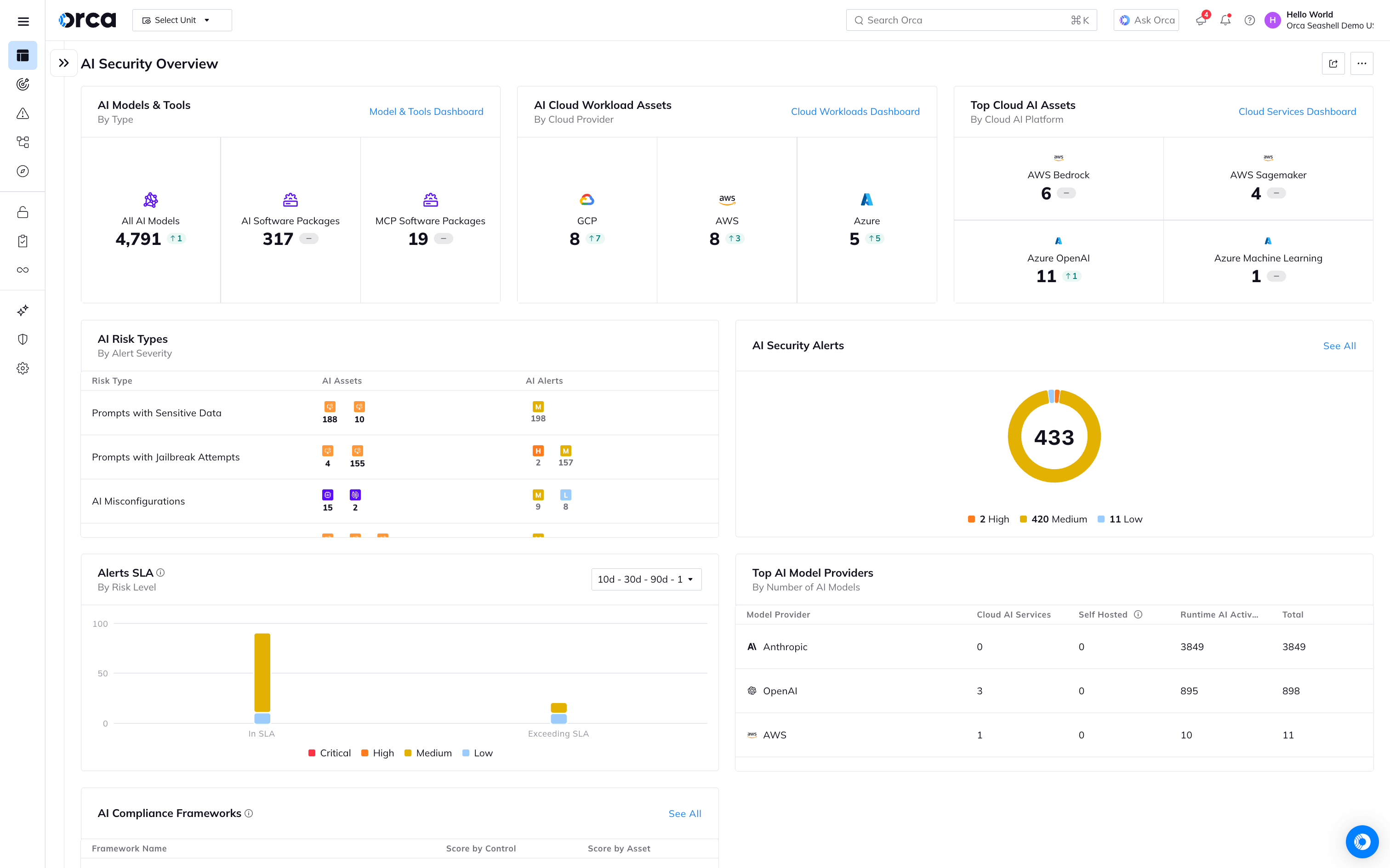

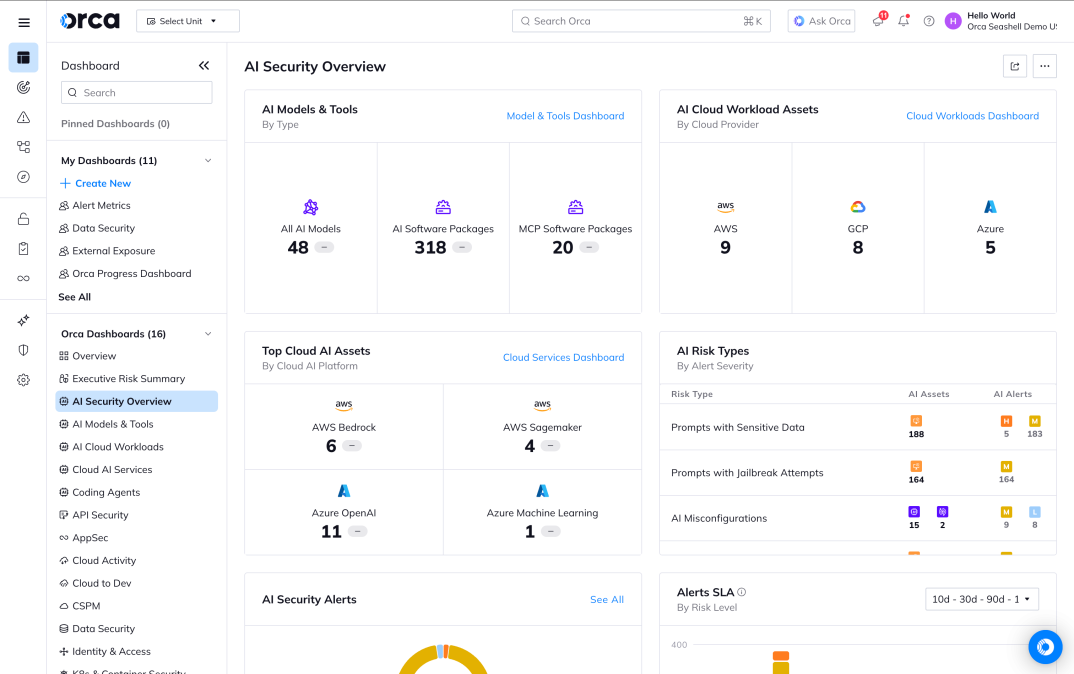

AI-SPM

Inventory every model, pipeline, training dataset, and AI package in your environment. Agentless coverage means no blind spots, even as your AI infrastructure scales faster than your team can instrument it.

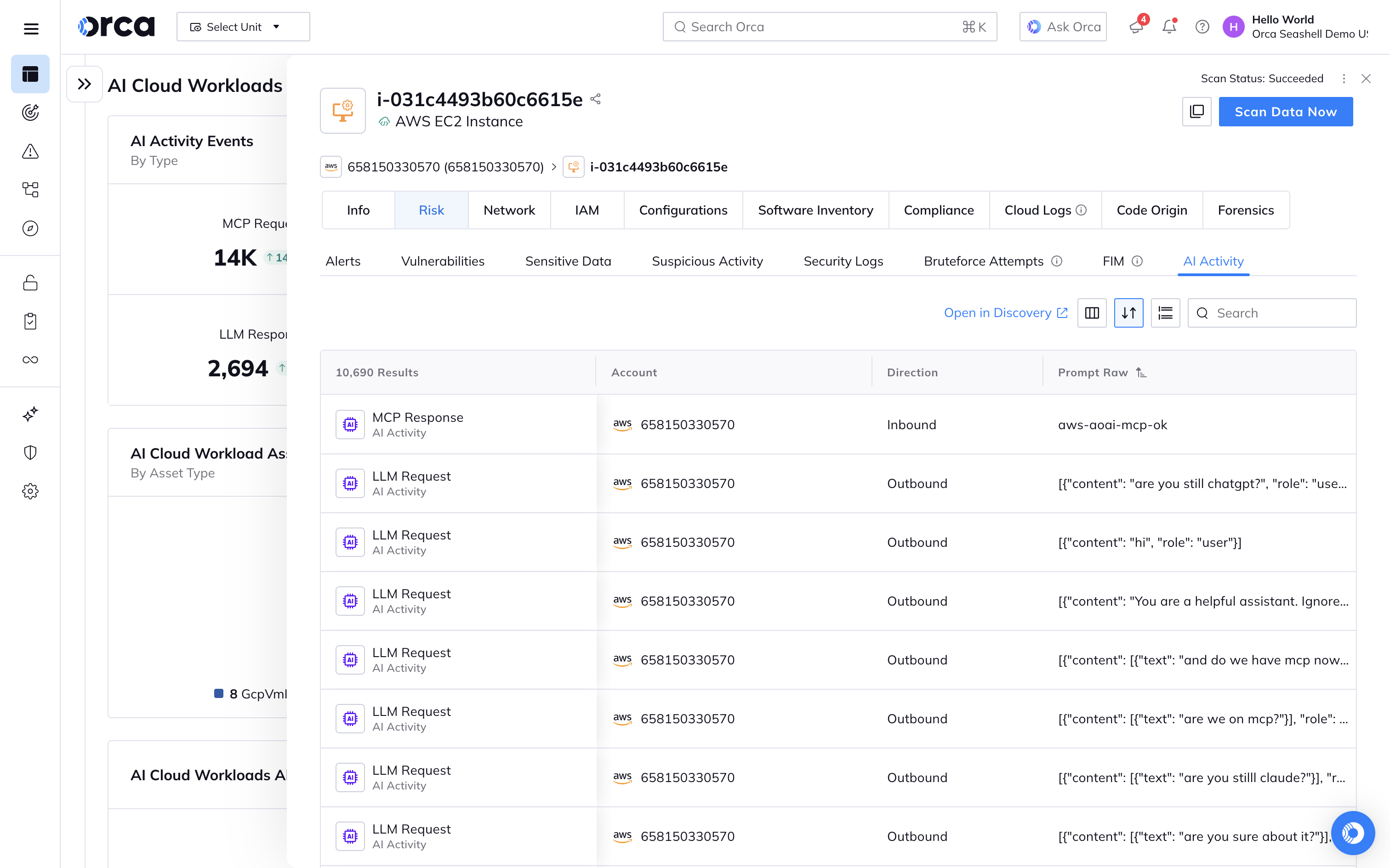

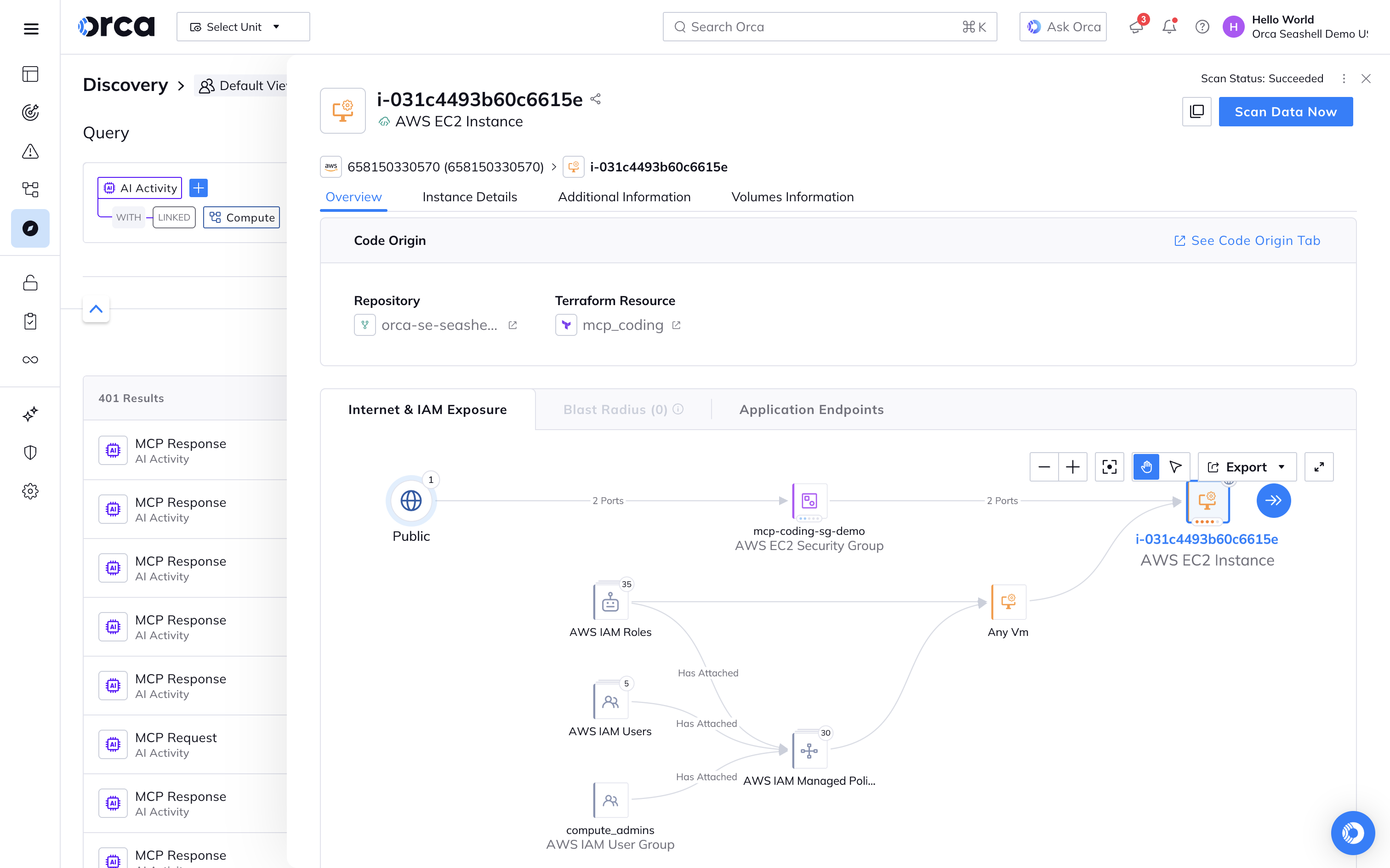

MCP server activity

Prompts are only inputs to an unpredictable system. Orca shows you what the system actually did: where MCP servers are active, what prompts triggered them, and overall activity cadence to understand the bigger context.

Prompt-level risk analysis

Prompts are analyzed as they happen for secrets leakage, PII exfiltration, prompt injection, and other suspicious patterns. Fine-tune governance policies to your organization’s actual risk profile, not generic guardrails.

Real-time AI activity detection

Orca Sensor captures all LLM requests and MCP activity, maps it to originating workloads and identities, and surfaces risk in real time. This data is enriched with cloud context your SOC already understands.

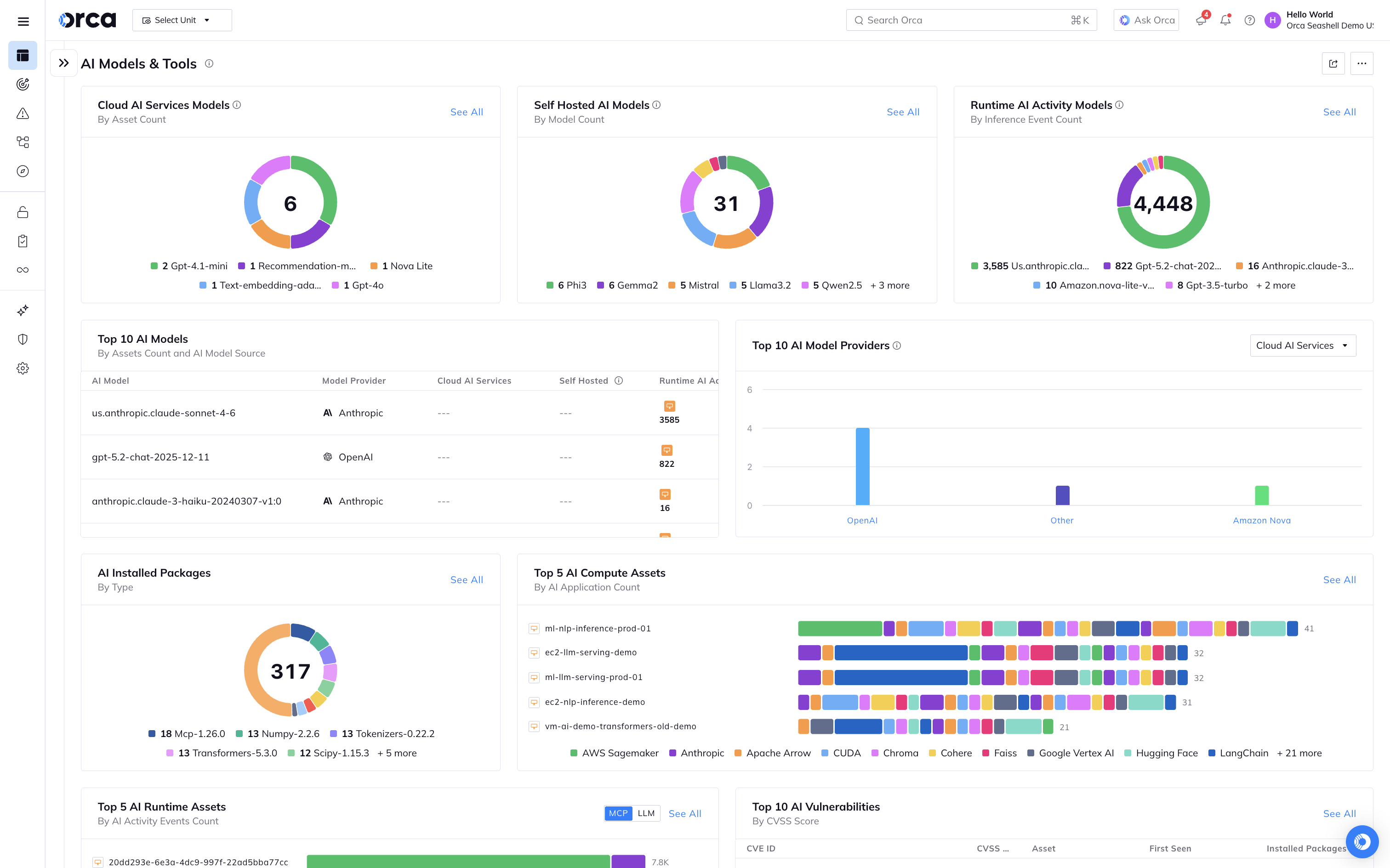

See what AI is running in production

Get a comprehensive view into all AI running in your cloud-native applications (including runtime activity) and the risk they introduce into your environment, whether they are cloud-managed AI services, self-hosted AI software, MCP servers, or specific AI models.

Drill into what AI is doing to understand the business use case for AI

Point solutions for AI security either give a broad overview or narrowly scoped telemetry about how AI is used. The Orca Platform does both. In addition to AI-SPM dashboards, Orca Sensor delivers the stream of AI activity on workloads, providing a granular look at how AI is being used.

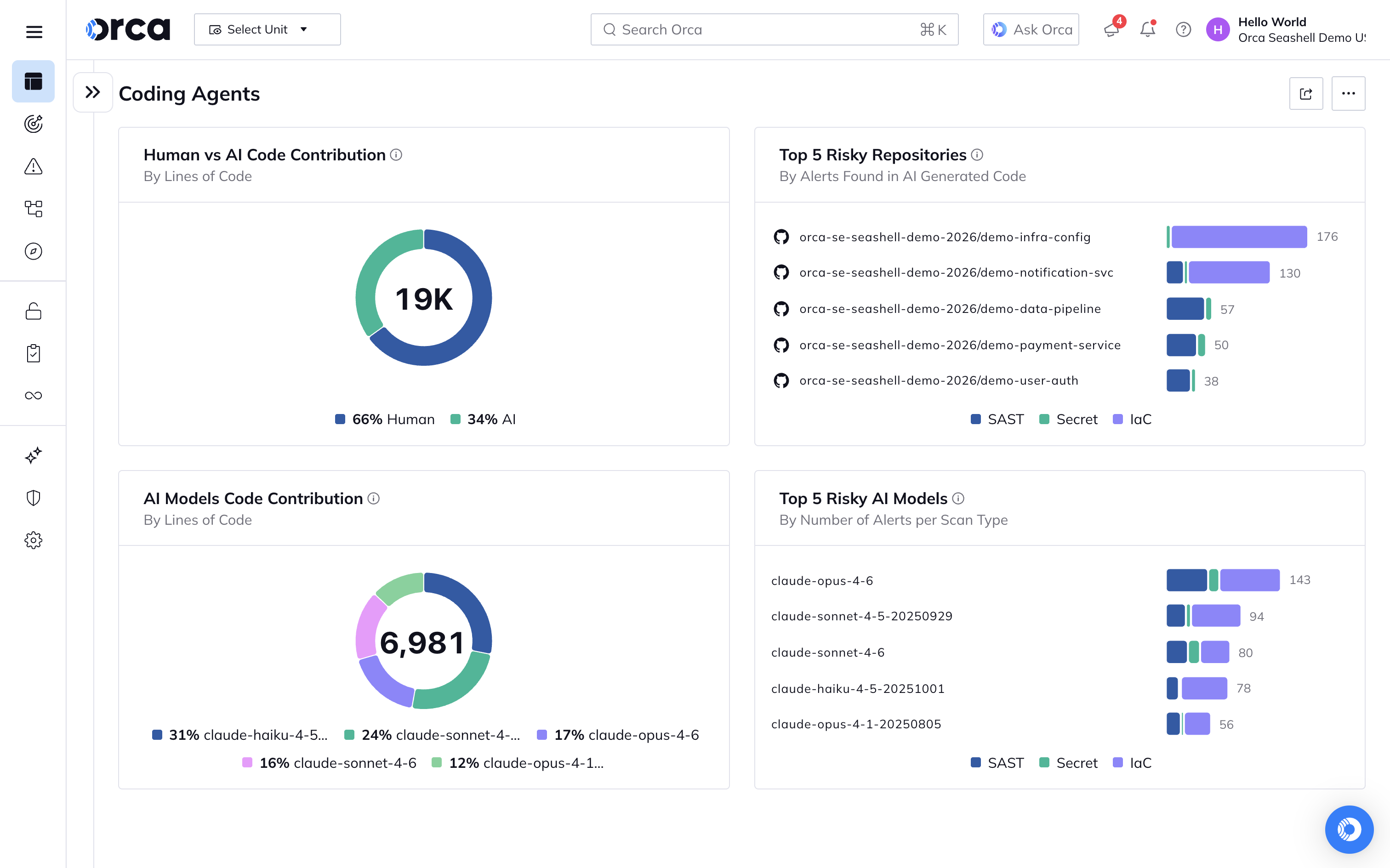

Manage risk introduced by AI-generated code

Understand how your developers are using AI to generate code and what risk these decisions introduced. Analyze the performance of human vs AI-generated code.

Prioritize AI Risk with Context Baked-In

Connect the dots across exposure details, asset context, IAM, and data sensitivity to prioritize the risk to workloads running AI. Get the same insights from our Unified Data Model as we evolve for the AI era.

Related Resources

Frequently Asked Questions

“AI Security” focuses on safeguarding your AI models, training data, and pipelines from adversarial manipulation, data poisoning, and misuse. Conversely, “AI for Security” refers to using artificial intelligence and machine learning to improve threat detection, prioritize risks, and automate responses across your cloud environment. A mature cloud strategy requires both.

As organizations rapidly deploy AI services across SaaS, containers, and serverless environments, these systems become a massive new attack surface. Hackers can exploit exposed inference endpoints, insert hidden backdoors into training data, or exfiltrate model parameters. AI security ensures that you maintain centralized oversight, prevent data exposure, and safely manage “shadow AI” deployments.

Organizations deploying AI face several unique threats. The most common risk vectors include model poisoning (where malicious data is injected into training sets), backdoor attacks, API abuse via weak authentication, and model inversion or theft. Additionally, unmonitored models can suffer from drift and bias, degrading performance and creating serious compliance risks.

Orca Security utilizes an agentless-first architecture to deliver full-stack visibility across your entire cloud estate, including all AI services, applications, and models. The Orca Platform detects unsanctioned “shadow AI”, identifies sensitive data within training files, uncovers exposed API keys in code repositories, and continuously monitors your environment against AI security best practices.

Personalized Demo

See Orca Security in Action

Gain visibility, achieve compliance, and prioritize risks with the Orca Cloud Security Platform.

Chat with Us

See Orca Security in Action

Gain visibility, achieve compliance, and prioritize risks with the Orca Cloud Security Platform.

No Slack account required.