Table of contents

- Defining AppSec: Protection Across the Full Lifecycle

- The Business Stakes: Why AppSec is Critical in 2026

- The 3 Stages of Modern Software Development

- The 5 Pillars of Application Security Risk

- The Industry Standard: OWASP Top 10

- From Checkpoints to Continuity: Integrating Security into the SDLC

- The Challenge with Traditional AppSec

- Modern Application Security: Connecting the Pieces

- The Growing Impact of AI on Application Security

- Bringing It All Together

- How Orca Can Help

- Application Security (AppSec) FAQs: Your Top Questions Answered

Imagine you built a LEGO castle.

It has walls, towers, gates, and hundreds of individual pieces working together. From the outside, it looks complete and secure. But if you look closer:

- Some bricks are fragile and easy to break

- A few doors don’t lock properly

- There are loose bricks you didn’t notice that could easily fall out

- And some pieces came from other sets and don’t quite match

Now imagine this castle is constantly being built and rebuilt, with new pieces added, old ones replaced, entire sections redesigned every day.

That’s what modern applications look like.

Application Security (AppSec) is the practice of making sure that the castle (i.e., your applications) can’t be broken into, no matter how fast it changes or how complex it becomes.

Defining AppSec: Protection Across the Full Lifecycle

Application security is the process of identifying, understanding, and fixing security risks in software throughout the entire development and production lifecycle. At its core, it answers three fundamental questions: what exists within an application, how it could be exploited, and how those risks can be remediated.

Historically, AppSec focused primarily on code. But modern applications are made up of much more than just what developers write. They include open-source components, APIs, infrastructure, cloud services, AI-driven functionality, and the way applications behave at runtime. As a result, the scope of application security has expanded significantly.

Today, application security spans the entire development lifecycle: from the IDEs where code is written, to the CI/CD pipelines where applications are built and deployed, to the environments where they ultimately run. It also includes the governance processes and policies that ensure security is consistently applied across teams and workflows.

This means securing an application requires understanding not just how it’s built, but also how it moves from development to production and how it runs once it’s live.

The Business Stakes: Why AppSec is Critical in 2026

Applications are now the primary way organizations deliver value. They handle sensitive data, power customer experiences, and connect directly to critical systems.

At the same time, the way applications are built has fundamentally changed. Teams are shipping continuously, relying heavily on open source, APIs, and third-party services, and increasingly using AI to generate code. Applications are also deeply tied to cloud infrastructure.

The result is simple. Development is faster, but also far more complex.

Security can no longer rely on a single checkpoint. When code is constantly changing and environments are constantly evolving, gaps appear quickly. Without the right visibility and processes, applications end up being built faster than they can be secured.

The 3 Stages of Modern Software Development

To understand why application security has become more difficult, it helps to look at how applications are actually built.

Modern applications aren’t created once and left alone. They move through stages, from development to deployment to runtime, and continue to change after they go live. Along the way, developers, pipelines, infrastructure, and third-party components all introduce risk.

Rather than a single build process, development is an ongoing system in which changes are constantly being made and pushed forward.

Stage 1: Code & Development (Shift Left)

Everything starts with code. Developers build and test applications in IDEs, often pulling in open source libraries, APIs, and AI-generated code to move faster.

This is where many risks begin. Vulnerabilities, insecure dependencies, and exposed secrets are often introduced early, long before the application is fully assembled. This is where the concept of shift left comes into play, focusing on identifying risks as early as possible, where remediation is typically faster, easier, and less costly.

Stage 2: Build & Deploy (CI/CD Pipeline)

Once code is written, it moves into the CI/CD pipeline, where it is tested, packaged, and deployed.

At this stage, infrastructure is defined, containers are created, and configurations determine how the application will run. If issues aren’t caught here, they are carried directly into production.

Speed increases, but so does the risk of misconfigurations, exposed secrets, and vulnerable components moving forward unchecked.
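One common way to keep issues from moving forward unchecked is a pipeline gate that fails the build when scan results cross a threshold. The sketch below is a minimal illustration of that idea, not a description of any particular tool: the severity scale, the finding format, and the `gate` function are all assumptions made for the example.

```python
# Hypothetical severity scale and finding format, chosen for illustration.
SEVERITY_ORDER = ["low", "medium", "high", "critical"]


def gate(findings, fail_at="high"):
    """Return (exit_code, blocking_findings) for a pipeline step."""
    threshold = SEVERITY_ORDER.index(fail_at)
    blocking = [
        f for f in findings
        if SEVERITY_ORDER.index(f["severity"]) >= threshold
    ]
    return (1 if blocking else 0), blocking


# Made-up scan results standing in for the output of earlier pipeline steps.
scan_results = [
    {"id": "dep-outdated", "severity": "medium"},
    {"id": "secret-in-config", "severity": "critical"},
]

exit_code, blocking = gate(scan_results)
print(exit_code, [f["id"] for f in blocking])
# A real pipeline step would finish with sys.exit(exit_code) so that a
# non-zero code stops the deployment.
```

Where the threshold sits is a policy decision. A strict gate catches more before production, while a looser one keeps delivery moving and relies on later stages for context.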

Stage 3: Production & Runtime (Shield Right)

Once deployed, the application is live and interacting with users, data, and other systems.

This is where context becomes critical. Not every vulnerability is exploitable or reachable. What matters is how the application actually behaves and what is exposed in a real environment.

Understanding runtime behavior is what allows teams to focus on the risks that truly matter.

Taken together, these stages show why application security can no longer be treated as a checkpoint with siloed tools and approaches. It must account for how applications are built, how they are deployed, and how they behave once they are running.

The 5 Pillars of Application Security Risk

To understand application security, it helps to break it down into the different types of risks that can exist within an application. Think of each of these as a different way your LEGO castle could be compromised.

Vulnerabilities in Code (SAST)

Static Application Security Testing (SAST) analyzes source code to identify issues like injection flaws, insecure logic, or unsafe functions.

It’s often built directly into the developer workflow so issues can be caught early, before they make it into production.

In the LEGO castle, this is like inspecting each brick before you use it. You’re checking for cracks, weak spots, or defects before it becomes part of the structure.

But there’s a limitation. Even if every individual brick looks fine, you still don’t know how the full castle will hold up once everything is assembled and under pressure.
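To make the idea of inspecting each brick concrete, here is a minimal sketch of a static check written with Python’s standard `ast` module. It parses source code without running it and flags calls to `eval` and `exec`. The deny-list and the `find_unsafe_calls` helper are assumptions for illustration; real SAST tools apply far larger rule sets and track how data flows through the program.

```python
import ast

# Hypothetical deny-list for illustration; real rule sets are much larger.
UNSAFE_CALLS = {"eval", "exec"}


def find_unsafe_calls(source):
    """Return (line number, function name) for each call to a deny-listed builtin."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if (
            isinstance(node, ast.Call)
            and isinstance(node.func, ast.Name)
            and node.func.id in UNSAFE_CALLS
        ):
            findings.append((node.lineno, node.func.id))
    return sorted(findings)


sample = "x = input()\nresult = eval(x)\nprint(result)\n"
print(find_unsafe_calls(sample))  # [(2, 'eval')]
```

The check only sees the code it is handed. It cannot tell whether line 2 is ever executed in the assembled application, which is the limitation described above.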

Open Source and Dependency Risk (SCA)

Modern applications are built using a mix of open source libraries and third-party components. Software Composition Analysis (SCA) identifies known vulnerabilities within those dependencies and provides visibility into the components and versions used across an application.

This helps surface the risk an application inherits from the components it depends on.

In LEGO terms, this is like pulling in pieces from other sets without knowing how strong or reliable they are. They may look fine on their own, but some weren’t designed to support or secure the structure the way you’re using them.

While SCA can highlight known issues within these pieces, it often lacks the context to determine whether those vulnerabilities are actually reachable or invoked within your specific application.
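At its simplest, the matching step behind SCA can be sketched as a lookup of pinned versions against known advisories. Everything in the example below is hypothetical: the package names, the versions, and the `ADVISORIES` table are invented for illustration, whereas real tools draw on vulnerability databases and resolve transitive dependencies too.

```python
# Hypothetical advisory data: package name -> versions with a known issue.
ADVISORIES = {
    "examplelib": {"1.0.0", "1.0.1"},
    "demo-parser": {"2.3.0"},
}


def parse_pins(requirements):
    """Parse 'name==version' lines, skipping blanks and comments."""
    pins = {}
    for line in requirements.splitlines():
        line = line.split("#", 1)[0].strip()
        if "==" in line:
            name, version = line.split("==", 1)
            pins[name.strip().lower()] = version.strip()
    return pins


def vulnerable_pins(requirements):
    """Return sorted (name, version) pairs that match a known advisory."""
    pins = parse_pins(requirements)
    return sorted(
        (name, version)
        for name, version in pins.items()
        if version in ADVISORIES.get(name, set())
    )


manifest = """
examplelib==1.0.1
demo-parser==2.4.0  # patched release
otherlib==0.9.0
"""
print(vulnerable_pins(manifest))  # [('examplelib', '1.0.1')]
```

Note that the result says nothing about whether the application ever calls the affected part of `examplelib`. That question needs reachability context.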

Exposed Secrets

Some risks don’t require exploiting a vulnerability. Exposed secrets like API keys, tokens, and credentials can give direct access to systems if they are discovered in code, configuration, logs, or other accessible locations.

These secrets often end up in code, configuration files, or pipelines unintentionally and can go unnoticed for a long time, for example when an old secret stays buried in Git commit history.

In the LEGO castle, this isn’t about weak bricks. It’s about leaving the keys to the front gate out in the open. No need to break through the walls if someone can just walk in.

Secrets detection helps find these exposures, but they’re still one of the most common and impactful risks teams face.
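A basic form of secrets detection is pattern matching over text. The sketch below assumes two illustrative rules: the widely documented `AKIA` prefix format for AWS access key IDs, and a generic rule for hard-coded credential assignments. The rule names and the `scan_for_secrets` function are hypothetical, and the key in the sample is the placeholder value used in AWS documentation, not a live credential.

```python
import re

# Illustrative patterns only; real scanners ship many more rules and add
# entropy checks to reduce false positives.
PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "hardcoded_credential": re.compile(
        r"(?i)\b(?:api_key|token|password)\s*=\s*['\"][^'\"]{8,}['\"]"
    ),
}


def scan_for_secrets(text):
    """Return (line number, rule name) for every line matching a pattern."""
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for rule, pattern in PATTERNS.items():
            if pattern.search(line):
                findings.append((lineno, rule))
    return findings


sample = "\n".join([
    'region = "us-east-1"',
    'aws_key = "AKIAIOSFODNN7EXAMPLE"',  # placeholder key from AWS docs
    'password = "hunter2hunter2"',
])
print(scan_for_secrets(sample))
# [(2, 'aws_access_key_id'), (3, 'hardcoded_credential')]
```

The same function could be run over each version of a file in commit history, which is how secrets that were later deleted from the current code can still be found.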

Infrastructure and Configuration Risk (IaC Security)

Applications rely on infrastructure defined through code. Misconfigurations in how that infrastructure is defined, deployed, or changed after deployment can expose an application, even if everything else is built correctly.

This often looks like overly permissive access, public exposure, or insecure defaults.

In the LEGO castle, this is like building strong walls and reinforced towers, but leaving the drawbridge permanently down. The structure itself is solid, but the way it’s set up makes it easy to access.

IaC scanning helps identify these risks earlier in the development lifecycle, often as part of CI/CD pipelines and governance controls, so they can be addressed before deployment.
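An IaC check is essentially a policy evaluated against resource definitions before anything is deployed. The following sketch uses a simplified, hypothetical resource format rather than any real IaC schema, and the two rules (an administrative port open to `0.0.0.0/0` and a publicly readable bucket) are examples of the overly permissive access and public exposure mentioned above.

```python
# Hypothetical, simplified resource definitions. Real IaC formats such as
# Terraform or CloudFormation have their own schemas.
resources = [
    {"name": "web-sg", "type": "firewall_rule", "port": 443, "source_cidr": "0.0.0.0/0"},
    {"name": "ssh-sg", "type": "firewall_rule", "port": 22, "source_cidr": "0.0.0.0/0"},
    {"name": "db-sg", "type": "firewall_rule", "port": 5432, "source_cidr": "10.0.0.0/16"},
    {"name": "logs", "type": "storage_bucket", "public_read": True},
]

# Ports assumed to be administrative for this example (SSH and RDP).
ADMIN_PORTS = {22, 3389}


def check_resource(resource):
    """Return a list of policy violations for one resource definition."""
    issues = []
    if resource["type"] == "firewall_rule":
        open_to_world = resource.get("source_cidr") == "0.0.0.0/0"
        if open_to_world and resource.get("port") in ADMIN_PORTS:
            issues.append("admin port open to the internet")
    if resource["type"] == "storage_bucket" and resource.get("public_read"):
        issues.append("bucket is publicly readable")
    return issues


findings = {}
for resource in resources:
    issues = check_resource(resource)
    if issues:
        findings[resource["name"]] = issues

print(findings)
```

Here `web-sg` passes even though it is open to the internet, because serving HTTPS publicly is the intended setup. Policies like this encode what counts as the drawbridge being left down.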

Runtime Behavior and Reachability

Not every vulnerability actually matters. What matters is what’s exposed, what’s reachable, and what an attacker could realistically exploit.

A vulnerability is reachable if there is a real path to it. This can be determined through code (static) reachability, where there is a valid execution path to the vulnerable code within the application, or runtime (dynamic) reachability, where the vulnerable component is accessible in a running application.

A vulnerability is exploitable if an attacker can realistically use that reachable issue in a real-world scenario, given the right conditions.

In the LEGO castle, this is the difference between a cracked brick buried deep inside a sealed wall and a visible gap along the main path to the gate. One exists, but can’t be accessed. The other can be reached and used to get inside.

Runtime context is what brings this into focus. It shows how the application actually behaves, what is exposed, and how components connect in the real world.
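Static reachability can be pictured as a graph search: start from the application’s entry points and follow calls until nothing new turns up. The call graph and function names below are invented for illustration, and real tools build the graph from the code itself, but the traversal follows the same idea.

```python
from collections import deque

# Hypothetical call graph: function -> functions it calls.
CALL_GRAPH = {
    "handle_request": ["parse_input", "render_page"],
    "parse_input": ["yaml_load"],
    "render_page": [],
    "legacy_export": ["xml_parse"],  # never called from an entry point
    "yaml_load": [],
    "xml_parse": [],
}


def reachable_from(entry_points, graph):
    """Breadth-first search returning every function reachable from the entry points."""
    seen = set(entry_points)
    queue = deque(entry_points)
    while queue:
        current = queue.popleft()
        for callee in graph.get(current, []):
            if callee not in seen:
                seen.add(callee)
                queue.append(callee)
    return seen


reachable = reachable_from(["handle_request"], CALL_GRAPH)
vulnerable = {"yaml_load", "xml_parse"}  # functions assumed to have known issues
print(sorted(vulnerable & reachable))  # ['yaml_load']
```

Both functions are flagged as vulnerable, but only `yaml_load` sits on a path from the entry point. `xml_parse` is the cracked brick sealed inside the wall.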

The Industry Standard: OWASP Top 10

The OWASP Top 10 is one of the most widely recognized frameworks for understanding application security risks. It highlights common categories of vulnerabilities, such as broken access control, injection flaws, and security misconfigurations.

For many organizations, it serves as a shared language for identifying and discussing application security issues across teams.

From Checkpoints to Continuity: Integrating Security into the SDLC

Application security works best when it is embedded across the entire software development lifecycle, rather than introduced as a final checkpoint.

This means integrating security directly into the environments where developers write code, the pipelines where applications are built and deployed, and the governance processes that define how security is consistently enforced. It also extends into production, where understanding how applications behave at runtime highlights real risk.

In the LEGO castle, this is like checking each piece before it is used, validating the structure during assembly, and continuously monitoring the castle after it is built.

When these stages are disconnected, issues slip through. When they are integrated, security becomes part of how software is built, not an obstacle to it.

The Challenge with Traditional AppSec

Many application security tools still operate within silos, focusing on individual parts of the lifecycle rather than the system as a whole.

Code scanning, pipeline checks, and cloud monitoring each provide value, but they don’t connect. As a result, teams get a high volume of findings without the context to understand what actually matters. This leads to alert fatigue, slower remediation, and gaps in visibility.

It’s like having separate inspectors for bricks, doors, and bridges without anyone mapping how they connect into the single castle that is being constructed.

Modern Application Security: Connecting the Pieces

Modern AppSec focuses on connecting signals across the entire application lifecycle.

Instead of viewing risks in isolation, it brings together insights from development, pipelines, environments, and runtime behavior to create a complete picture of how an application is built and how it actually operates.

With this level of context, teams can better understand what is actually exploitable, trace issues back to their source, and prioritize what matters most. This is often described as cloud-to-dev visibility.

The Growing Impact of AI on Application Security

AI is accelerating how applications are built. Code is generated faster, dependencies are introduced more frequently, and new integrations are constantly being added. This increases productivity, but it also introduces new risks.

Generated code can include insecure patterns, lack clear ownership or provenance, and introduce vulnerable or outdated dependencies. AI services introduce new credentials and access points. Prompt injection creates new ways to expose sensitive data.

In the LEGO castle, it’s like having a system that builds new sections instantly, but doesn’t always ensure they’re secure.

As development speeds up, visibility and context become even more important.

Bringing It All Together

Application security today is about more than scanning code.

Risk exists across every layer of an application, from vulnerabilities in code and open source dependencies, to exposed secrets, misconfigured infrastructure, and runtime behavior. These risks are introduced at different stages, from the IDE to the CI/CD pipeline to the environments where applications ultimately run.

The challenge is that these layers are deeply connected, but often treated separately. A vulnerable dependency may only become exploitable if it’s actually reachable at runtime. A misconfiguration can expose an otherwise secure application. And an exposed secret can provide direct access without needing to exploit a vulnerability.

Effective application security requires bringing these pieces together. It connects insights across the lifecycle, focuses on what is actually exploitable, and enables teams to trace issues back to their source and fix them quickly. Without this connected approach, security remains fragmented, creating gaps in visibility, slowing remediation, and making it difficult to keep up with the pace of modern development.

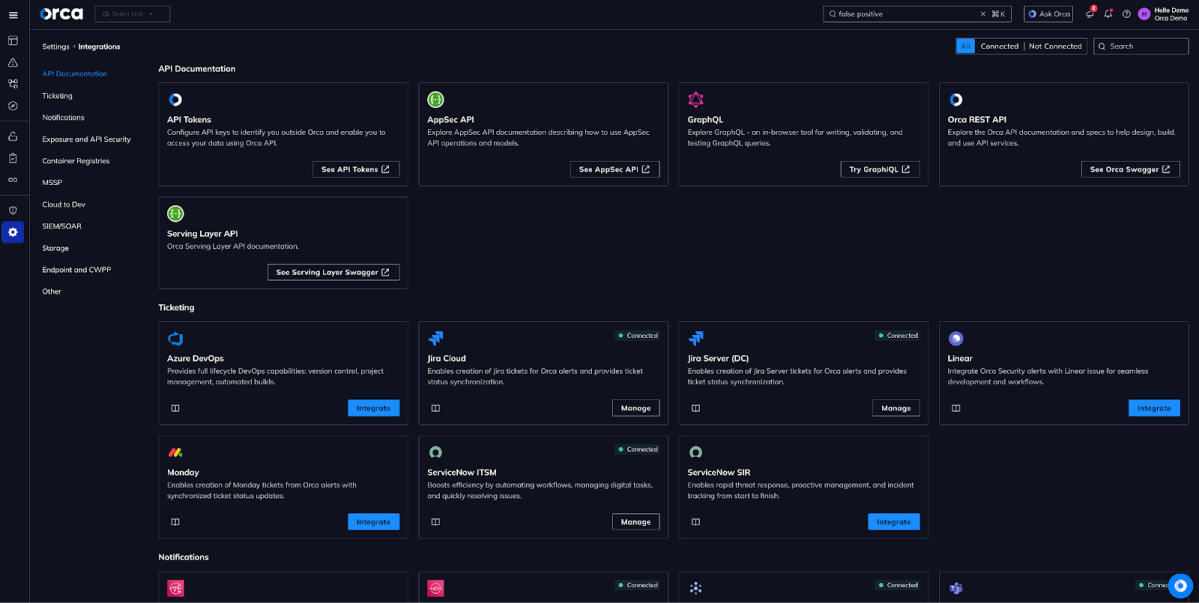

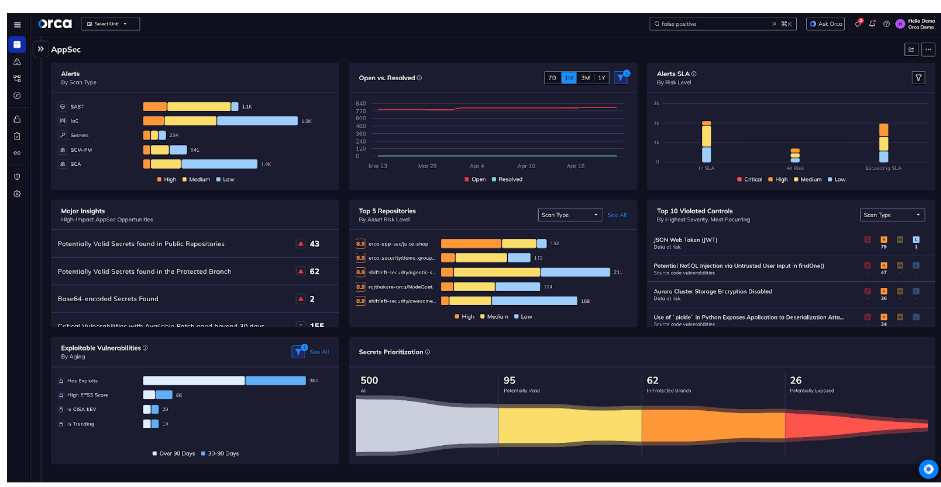

How Orca Can Help

Modern application security requires a connected approach across development, cloud, and runtime. Most organizations, however, still operate across fragmented tools and disconnected insights, making it difficult to understand which risks actually matter, how they can be exploited, and where they originate. Orca brings these pieces together by providing unified visibility and context from cloud to dev, allowing teams to see risk not as isolated findings, but as part of a complete system.

With this context, teams can identify vulnerabilities, secrets, and misconfigurations across code, pipelines, and cloud environments, understand which risks are actually reachable and exploitable at runtime, and trace them back to their source in code. This makes it possible to prioritize remediation based on real-world impact and enables developers to fix issues quickly within the tools and workflows they already use.

By connecting runtime insight with development context, Orca helps teams move beyond managing alerts and focus on reducing real, exploitable risk.

Application Security (AppSec) FAQs: Your Top Questions Answered

Application Security (AppSec) focuses on securing code, dependencies, secrets, and runtime behavior. Cloud Security protects infrastructure and services, while Network Security controls traffic and access between systems.

AppSec centers on how applications are built and exploited, while cloud and network security focus on the environments around them.

AI-generated code can introduce insecure patterns, vulnerable dependencies, and unverified logic at scale.

Because it is created and deployed quickly, traditional review processes often miss these risks. Securing it requires integrating automated scanning and validation directly into the CI/CD pipeline.

A Software Bill of Materials (SBOM) is an inventory of all components and dependencies in an application.

It provides visibility into open source usage, helps identify known vulnerabilities, and supports supply chain security, making it increasingly required in many regulatory, compliance, and enterprise procurement environments.

Prioritization starts with reachability, identifying whether a vulnerability is actually accessible through code paths or in a running application.

Exploitability then determines whether it can realistically be used in a real-world attack.

Together, they help teams focus on risks that truly matter.
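As a rough illustration of that ordering, the sketch below ranks findings by reachability and exploitability first and uses severity only as a tie-breaker. The `Finding` fields, the 0 to 10 severity scale, and the sample data are assumptions for the example, not a standard scoring model.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    title: str
    severity: float  # hypothetical 0-10 score
    reachable: bool
    exploitable: bool


def priority_key(finding):
    """Sort key: reachable and exploitable first, then reachable, then severity."""
    return (
        finding.reachable and finding.exploitable,
        finding.reachable,
        finding.severity,
    )


findings = [
    Finding("Critical bug in unused library code", 9.8, False, False),
    Finding("Injection in public endpoint", 6.5, True, True),
    Finding("Weak hashing in internal job", 7.5, True, False),
]

ranked = sorted(findings, key=priority_key, reverse=True)
print([f.title for f in ranked])
```

The finding with the highest severity ends up last because nothing can reach it, while the lower-severity issue on a public endpoint moves to the top.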
